Machine Learning Framework PyTorch Enabling GPU-Accelerated Training on Apple Silicon Macs - MacRumors

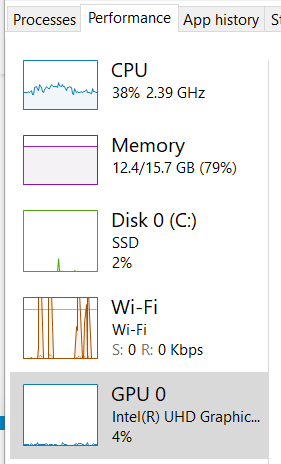

Use GPU in your PyTorch code. Recently I installed my gaming notebook… | by Marvin Wang, Min | AI³ | Theory, Practice, Business | Medium

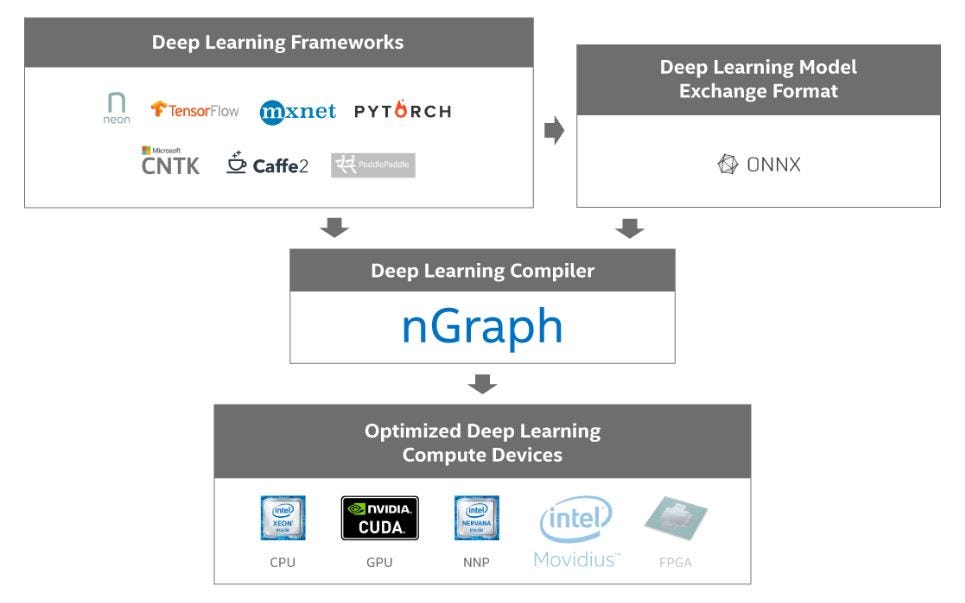

Differentiating PyTorch from all other Deep Learning frameworks | by Robin Familara | Udacity PyTorch Challengers | Medium

![[P] PyTorch M1 GPU benchmark update including M1 Pro, M1 Max, and M1 Ultra after fixing the memory leak : r/MachineLearning](https://external-preview.redd.it/mc-ZbvmfJRshBvv1SWUeIvZv_3uy-q5oj3h1zIvTob8.jpg?width=640&crop=smart&auto=webp&s=b86001bbcf01c6b27a2188e4d701cc30efeca422)

[P] PyTorch M1 GPU benchmark update including M1 Pro, M1 Max, and M1 Ultra after fixing the memory leak : r/MachineLearning

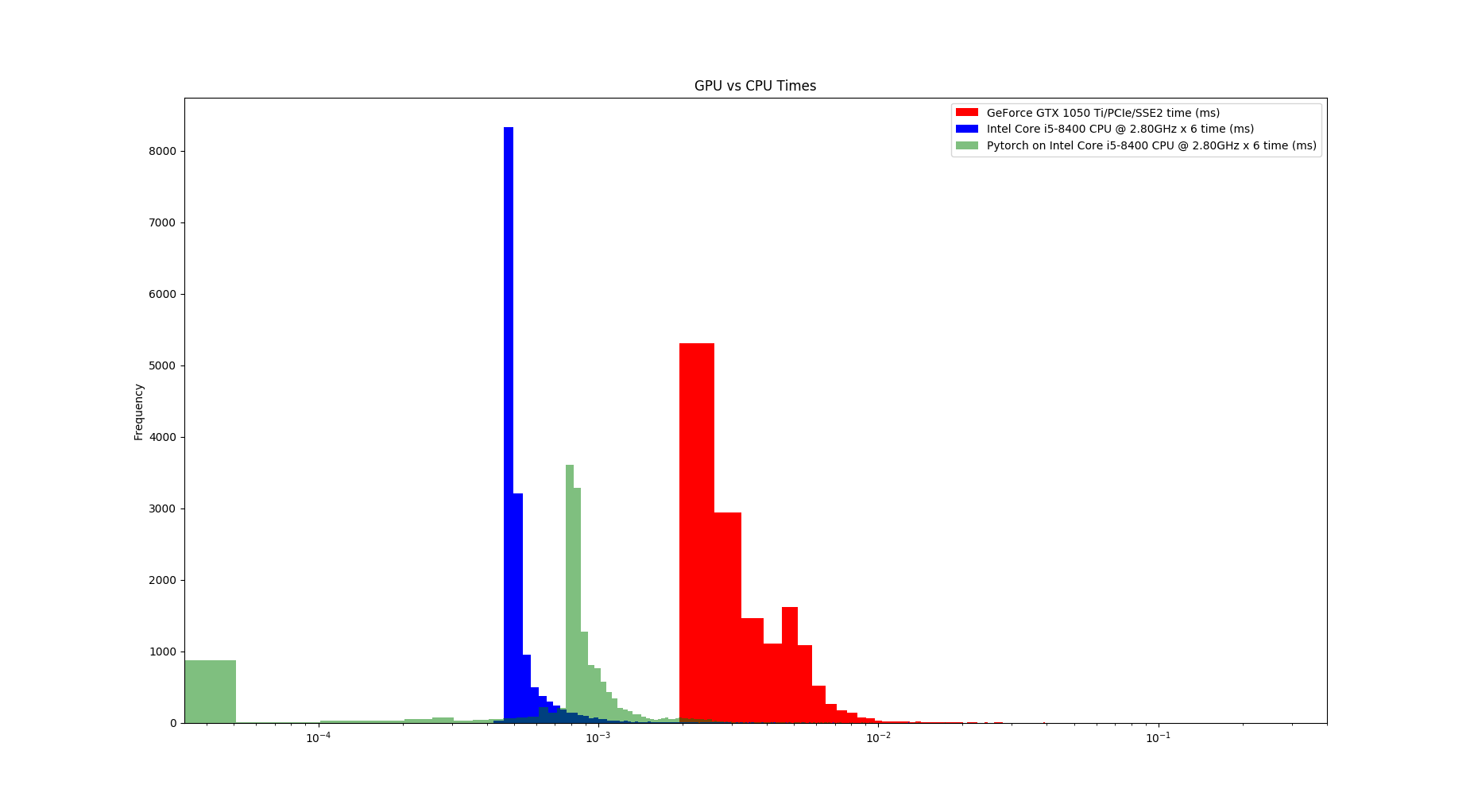

![[D] My experience with running PyTorch on the M1 GPU : r/MachineLearning](https://preview.redd.it/p8pbnptklf091.png?width=1035&format=png&auto=webp&s=26bb4a43f433b1cd983bb91c37b601b5b01c0318)